Whisper is an open-source speech recognition system developed by OpenAI that transcribes audio entirely on your own hardware, free, private, and accurate enough to rival paid cloud services: no API keys, no usage limits, no audio leaving your machine.

What Whisper Actually Is

Whisper is a deep learning model trained on 680,000 hours of multilingual audio, 77 years of continuous speech pulled from diverse real-world sources rather than clean studio recordings.

That training data is what makes Whisper unusually robust. Most speech recognition systems are trained on high-quality controlled audio and fall apart the moment they encounter a heavy accent, background noise, or a poor microphone. Whisper was trained on all of it. Different accents, varying recording quality, multiple languages, overlapping speech, the model has heard versions of all of these and learned to work through them.

The result is a system that supports over 99 languages, can translate non-English audio directly into English text, generates word-level timestamps for subtitle work, and outputs in multiple formats, including plain text, SRT, VTT, and JSON. You download the model weights once, and they run locally forever.

How Whisper Transcribes Audio

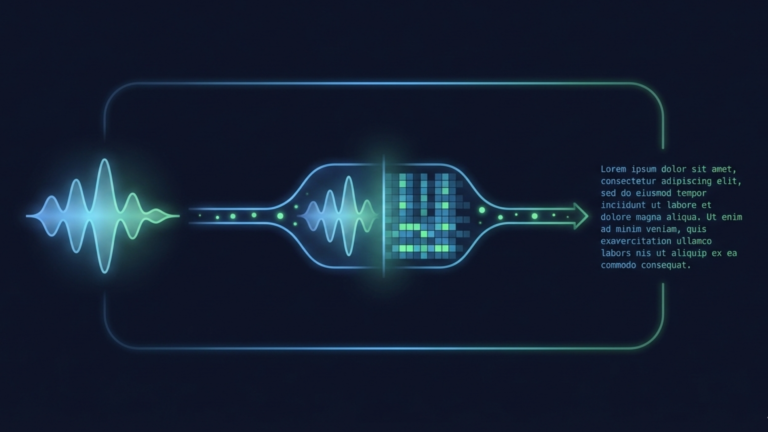

The process starts with your audio file, an MP3, WAV, M4A, or most common formats. Whisper hands it to FFmpeg, which converts it into a standardized 16kHz mono WAV file regardless of what you fed in.

From there, the audio is converted into a mel spectrogram. Think of this as a visual representation of the sound, mapping frequency and time in a way that neural networks can process. Instead of working with raw audio waveforms, the model looks at these spectrograms the way you might look at a page of text.

A transformer architecture, with the same fundamental design behind GPT, then processes that spectrogram. It has learned during training to recognize the acoustic patterns that correspond to phonemes, words, and sentences across dozens of languages. It reads the spectrogram and produces text.

What comes out the other side is a transcription with optional timestamps marking when each segment or word was spoken. The entire process happens on your machine. Your audio file never leaves your hardware.

The Model Family

Whisper is not a single model; it is a family of five, each trading speed for accuracy.

The tiny and base models are fast and lightweight, running comfortably on modest hardware, including older laptops and low-power devices like a Raspberry Pi. They are good enough for casual use, quick tests, or situations where speed matters more than precision.

The small and medium models hit the practical sweet spot for most users. Medium in particular delivers high accuracy on challenging audio-accented speech, background noise, and technical vocabulary while still running on a CPU in roughly real time. A one-minute recording takes roughly one minute to process.

The large model is the most accurate but demands significant hardware. It needs around 10GB of VRAM to run comfortably and is best suited for GPU-equipped machines where forensic-level accuracy matters, such as legal transcription, research interviews, or any audio where errors carry real consequences.

For English-only audio, each model has an .en variant base.en, medium.en, and so on, which is slightly faster because it does not allocate capacity for multilingual support. If you are only ever transcribing English, always use these.

Why Local Matters

The case for running Whisper locally is not just about cost, though the savings are real. Cloud transcription services typically charge between $0.006 and $0.03 per minute. An hour of audio runs $0.36 to $1.80. For heavy users transcribing dozens of hours a month, that compounds quickly into a meaningful expense.

The deeper case is privacy. When you use a cloud service, you are deciding on behalf of every person whose voice appears in that recording. Research subjects, interview sources, and meeting participants, none of them chose to have their speech processed on a third-party server. Local Whisper removes that question entirely. The audio stays on your hardware, under your control, subject to your security practices and nobody else’s.

It also means no internet dependency. Whisper runs completely offline once the models are downloaded. No connectivity, no rate limits, no service outages disrupting your workflow. You own the tool.

The Takeaway

Whisper brings enterprise-grade speech recognition to your own hardware without the enterprise price tag or the privacy tradeoff. The model family covers everything from lightweight transcription on a Raspberry Pi to forensic-level accuracy on a GPU-equipped machine. Your audio stays local, your workflow stays uninterrupted, and the tool is yours permanently. That is the ingredient.