If you’ve spent any time in tech, you’ve heard two terms thrown around for running isolated applications: Docker containers and Virtual Machines. They both solve similar problems, isolating applications and making better use of hardware, but they work in fundamentally different ways.

They are different tools built on different ideas, and understanding the difference starts with understanding what each one actually does under the hood.

The One-Paragraph Answer

A Virtual Machine runs a complete operating system, including its own kernel, on top of your host machine using software called a hypervisor. It is heavy and slow to start, but strongly isolated.

A Docker container shares the host operating system’s kernel but isolates the application at the process level. It is lightweight, starts in seconds, and uses a fraction of the resources a VM requires.

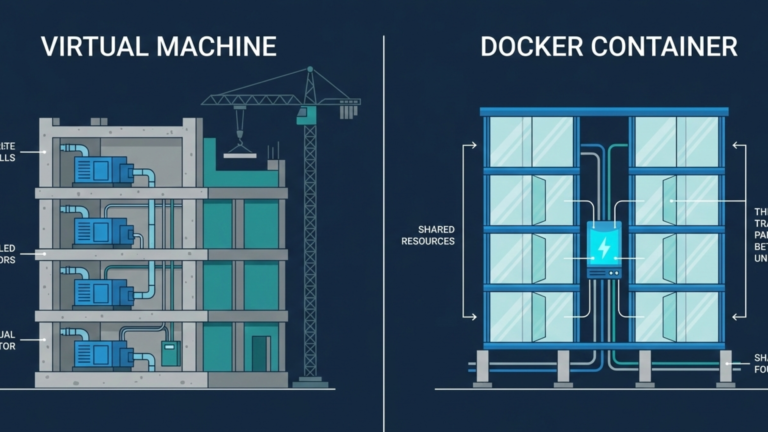

Think of VMs as standalone houses: each one has its own foundation, plumbing, and electrical system, completely separate from the others. Containers are condos in a single building: the foundation and infrastructure are shared, but each unit is its own separate living space. One is more expensive to build and maintain. The other is far more efficient to run at scale.

How Virtual Machines Work

A Virtual Machine is an emulation of a physical computer. A hypervisor, such as VMware ESXi, VirtualBox, or KVM, sits between the physical hardware and the virtual machines running on top of it, allocating CPU, memory, and storage to each one.

Every VM contains a full operating system with its own kernel, its own drivers, its own system libraries, and its own virtualized hardware. When you run three VMs on a single server, you are running three separate operating systems. Each one boots up, loads its kernel, initializes its services, and maintains its own memory space entirely independent of the others.

This is what makes VMs so strongly isolated. A problem in one VM’s kernel stays inside that VM. But it is also what makes them heavy. Every VM duplicates system processes, typically consumes hundreds of megabytes to gigabytes of memory for its operating system alone, and can take anywhere from tens of seconds to several minutes to boot. The isolation is real, but it comes at a high cost.

How Docker Containers Work

Docker containers take a fundamentally different approach. Rather than emulating hardware and running a separate operating system, containers use two features built directly into the Linux kernel, namespaces and control groups (cgroups), to carve out isolated environments for each application. Namespaces control what a process can see; control groups limit how much CPU, memory, and other resources it can use.

Every container on a host shares the same operating system kernel. What each container gets is its own isolated view of the system: its own filesystem, its own process tree, its own network interface, and its own resource limits. From the perspective of the application running inside, it appears to have the whole machine to itself. But it is sharing the kernel with every other container on that host.
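You can see these kernel features from any Linux process, containerized or not. The sketch below is a minimal illustration, assuming a Linux system with /proc mounted; the function names are made up for this example. It lists the namespaces the current process belongs to and the cgroup it has been placed in. On other systems it simply returns empty results.

```python
import os
from pathlib import Path


def list_namespaces(pid="self"):
    """Return {namespace_type: identifier} for a process, or {} if unavailable."""
    ns_dir = Path("/proc") / str(pid) / "ns"
    namespaces = {}
    if not ns_dir.is_dir():
        return namespaces  # not Linux, or /proc is not mounted
    for entry in sorted(ns_dir.iterdir()):
        try:
            # Each entry is a symlink whose target looks like "pid:[4026531836]".
            namespaces[entry.name] = os.readlink(entry)
        except OSError:
            continue  # some entries may be unreadable without privileges
    return namespaces


def read_cgroup_membership(pid="self"):
    """Return the raw lines of /proc/<pid>/cgroup, or [] if unavailable."""
    cgroup_file = Path("/proc") / str(pid) / "cgroup"
    try:
        return cgroup_file.read_text().splitlines()
    except OSError:
        return []


if __name__ == "__main__":
    for name, ident in list_namespaces().items():
        print(f"{name:10} {ident}")
    for line in read_cgroup_membership():
        print("cgroup:", line)
```

Two processes that print the same identifier for a namespace type are in the same namespace. Run the script on the host and again inside a container, and the identifiers for types such as pid, net, and mnt will normally differ, even though both processes are served by the same kernel.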

This is why containers start in seconds rather than minutes. There is no operating system to boot, no kernel to load, no system services to initialize. The application simply starts. And because there is no duplicate operating system consuming resources, a single server can run hundreds of containers in the space where it might only manage a handful of VMs.
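To get a feel for "the application simply starts", it helps to measure how long it takes to launch an ordinary process, since a container is essentially a process started with extra isolation applied. This is a rough sketch, not a benchmark: the helper name is invented for this example, and the number you see depends entirely on your machine.

```python
import subprocess
import sys
import time


def time_process_start(command):
    """Run a command to completion and return (elapsed_seconds, exit_code)."""
    started = time.perf_counter()
    completed = subprocess.run(command, capture_output=True)
    elapsed = time.perf_counter() - started
    return elapsed, completed.returncode


if __name__ == "__main__":
    # Launch a fresh Python interpreter that does nothing and exits.
    elapsed, code = time_process_start([sys.executable, "-c", "pass"])
    print(f"plain process: {elapsed:.3f}s (exit code {code})")
```

If Docker is installed, you can pass a command such as `["docker", "run", "--rm", "alpine", "true"]` to the same helper and compare. The first run will include the time to download the image, so run it twice before drawing any conclusions.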

The trade-off is isolation. Because containers share the host kernel, a serious kernel-level vulnerability affects everything running on that host. VMs are far less exposed to this problem: each one runs its own kernel, so an attacker would also have to break through the hypervisor to reach other VMs or the host.

Why the Difference Matters

The resource gap between the two technologies is significant. A VM running a simple web application might require several gigabytes of disk space and a gigabyte or more of memory just to run the operating system underneath it. A container running the same application might need a fraction of that, just enough for the application and its dependencies, nothing more.
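A quick back-of-the-envelope calculation makes the gap concrete. Every number below is an illustrative assumption rather than a measurement, and the model only looks at memory; it ignores CPU, disk, and the memory the host itself needs.

```python
def instances_that_fit(host_memory_mb, app_memory_mb, per_instance_overhead_mb):
    """How many instances fit in host memory, given a fixed overhead per instance."""
    per_instance = app_memory_mb + per_instance_overhead_mb
    if per_instance <= 0:
        raise ValueError("per-instance memory must be positive")
    return host_memory_mb // per_instance


# Illustrative assumptions, not measurements:
HOST_MEMORY_MB = 64 * 1024    # a 64 GB server
APP_MEMORY_MB = 200           # the web application itself
VM_OS_OVERHEAD_MB = 1024      # guest operating system inside each VM
CONTAINER_OVERHEAD_MB = 10    # small per-container runtime cost

vms = instances_that_fit(HOST_MEMORY_MB, APP_MEMORY_MB, VM_OS_OVERHEAD_MB)
containers = instances_that_fit(HOST_MEMORY_MB, APP_MEMORY_MB, CONTAINER_OVERHEAD_MB)
print(f"VMs: {vms}, containers: {containers}")  # VMs: 53, containers: 312
```

Under these assumptions the same server holds roughly six times as many containers as VMs, and the ratio grows as the application gets smaller relative to the guest operating system.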

This is why containers transformed how modern software is built and deployed. The ability to start hundreds of isolated application instances on a single server, in seconds, with minimal overhead, opened up entirely new ways of thinking about scale.

Can You Use Both?

Absolutely, and many organizations do. Running containers inside virtual machines is common in production infrastructure. The VMs provide strong isolation at the infrastructure level, separating different teams, projects, or customers from each other. The containers provide efficient, fast application packaging and scaling within each VM. You get the security boundaries of one and the density of the other.
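The layered setup can be sketched as a tiny model. The class, function, and container names here are hypothetical, and the model assumes that the hypervisor boundary holds and that container names are unique. It answers one question: if the kernel under a given container is compromised, which other containers are exposed?

```python
from dataclasses import dataclass, field


@dataclass
class VirtualMachine:
    name: str
    containers: list = field(default_factory=list)


def shares_kernel(vms, container_a, container_b):
    """True if both containers run in the same VM, and so on the same guest kernel."""
    for vm in vms:
        if container_a in vm.containers:
            return container_b in vm.containers
    raise KeyError(f"unknown container: {container_a}")


def kernel_blast_radius(vms, compromised_container):
    """Containers exposed if the kernel under `compromised_container` is compromised."""
    for vm in vms:
        if compromised_container in vm.containers:
            return sorted(vm.containers)
    raise KeyError(f"unknown container: {compromised_container}")


# One VM per team, several containers per VM.
fleet = [
    VirtualMachine("team-a-vm", ["a-web", "a-worker"]),
    VirtualMachine("team-b-vm", ["b-web"]),
]

print(shares_kernel(fleet, "a-web", "a-worker"))  # True
print(shares_kernel(fleet, "a-web", "b-web"))     # False
print(kernel_blast_radius(fleet, "a-web"))        # ['a-web', 'a-worker']
```

Team A's containers share a kernel with each other but not with Team B's, so a kernel-level problem stays inside one VM while each team still gets container-level density within its own boundary.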

The Takeaway

Virtual Machines virtualize the hardware to run complete, independent operating systems. Containers virtualize the operating system to run isolated application processes. VMs are heavier and slower to start, but more strongly isolated. Containers are lighter and faster, but share the host kernel. Neither is better in absolute terms; they solve different problems at different levels of the stack. Once you understand what each one actually is, choosing between them becomes much more straightforward.